Python PDF Scraping – How to Extract PDF Files from Websites

Learn how to scrape and download PDF files from the web.

DataOx professional team shares its

Python PDF scraping texhniques.

Ask us to scrape the website and receive free data sample in XLSX, CSV, JSON or Google Sheet in 3 days

Scraping is the our field of expertise: we completed more than 800 scraping projects (including protected resources)

Table of contents

Estimated reading time: 4 minutes

Introduction to Python PDF Scraping

There is a great amount of information on the web provided in PDF format which is used as an alternative for paper-based documents. Thanks to its great compatibility across different operating systems and devices, it’s one of the most commonly used data formats today.

However, the content in PDF format is often unstructured, and downloading and scraping hundreds of PDF files manually is time-consuming and rather exhausting. In this article, we’ll explore the process of downloading data from PDF files with the help of Python and its packages. So, let’s move on and discover this PDF scraper for free!

How to Scrape Data from PDF Documents

Before getting deeper into coding with Python, let’s have a look at the other methods that can be used for extracting PDF data:

- Copy/paste method,

- Manual data extraction outsourcing,

- PDF convertor tools usage,

- Automated PDF data extraction tool (OCR softwares).

How to Scrape all PDF Files from a Website

In this part, we’ll learn how to download files from a web directory. We’re going to use BeautifulSoup – the best scraping module of Python, as well as the requests module. As usually, we start with installing all the necessary packages and modules.

The next step is to copy the website URL and build an HTML parser using BeautifulSoup, then use the requests module to get request.

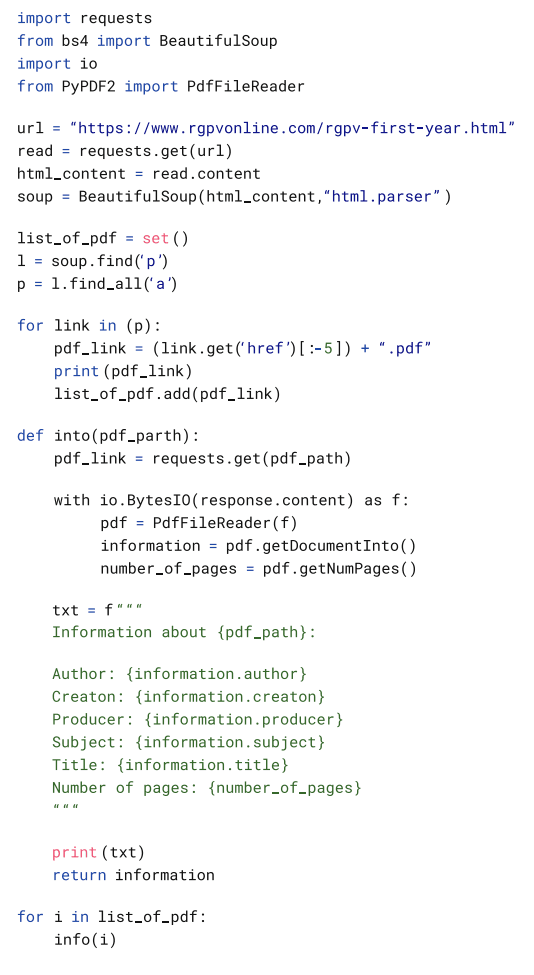

After that, we need to look through the PDFs from the target website and finally we need to create an info function using the pypdf2 module to extract all the information from the PDF. The complete code looks like this:

How to Get URLs from PDF Files

In this section, we are going to learn how to extract URLs from PDF files with Python. For this purpose, we’ll use PyMuPDF and pikepdf libraries by applying two methods:

- To extract annotations like markups, and notes, and comments that redirect to the browser when you click on them.

- To extract the whole raw text and parse URLs by using regular expressions.

Before starting, it’s necessary to install the following libraries:

Getting URLs from annotations

For this method, we’ll use the pikepdf library. We need to open a PDF file and go through all annotations to identify if there is an URL:

You can use any PDF file, just be sure that it has clickable links. After running the code, you will get the output with links:

Getting URLs through regular expressions

In this method, we will get all the raw text from a PDF file and parse URLsafter that using regular expressions. First, we need to get the text version of our PDF file:

The next step is to parse the URLs from the text by running the following module.

The output will be the following:

Common Python Libraries for PDF Scraping

Here is the list of Python libraries that are widely used for the PDF scraping process:

- PDFMiner is a very popular tool for extracting content from PDF documents, it focuses mainly on downloading and analyzing text items.

- PyPDF2 is a pure-python library used for PDF files handling. It enables the content extraction, PDF documents splitting into pages, document merging, cropping, and page transforming. It supports both encrypted and unencrypted documents.

- Tabula-py is used to read the table of PDF documents and convert into pandas’ DataFrame and also it enables to convert PDF files into CSV/JSON file.

- PDFQuery is used to extract data from PDF documents using the shortest possible code.

The Key Challenges of PDF Files Scraping

The extraction of enormous amounts of data stored in online PDF documents might be a big challenge for business owners, since it’s time-consuming, costly, and often inefficient if done manually.

The alternative to manual scraping is building an in-house PDF scraper. This approach is better but still has its complications, like various formats maintenance, anti-scraping traps handling, data structuring and formatting, etc.

We know that most PDF documents are scanned and scrapers fail to understand them without Optical Character Recognition application. So, another solution is to get OCR software that is a more comprehensive solution for extracting data from PDFs. Such automated PDF scrapers have a combination of OCR RPA, pattern and text recognition, as well as other useful techniques for PDF data extraction handling.

Python PDF Scraping – FAQ

How to scrape and download PDF from website with Python?

For starters, you should install the two necessary modules: BeautifulSoup and PdfReader. BeautifulSoup is used to check website URLs and parse HTML. After that, you should import the files to PdfFileReader to save them in the end format.

How to extract PDF from website?

You can manually extract PDF files presented on a web page by right-clicking them, pressing the ‘save as; button, and downloading them to your PC. If this option is unavailable, click on the mouse’s right button and choose the ‘inspect’ option. Look through the code and find the embed/iframe source URL that ends with .pdf. Copy it and place it in a new tab or window of your browser. Thus you will see the source PDF file that can be easily downloaded.

What are PDF scrapers?

PDf scrapers are tools to find, convert and extract data from PDF files. Examples of offline and online PDF scraping software are DocParser, Apify, DocSumo, and FineReader.

Conclusion

At times you may need to download over a hundred PDF files from the web or maybe other types of scanned documents like invoices, financial reports, purchase orders, or presentations. In such situations, you might require some professional help to do it automatically.

At DataOx we are always ready to provide you with expert-level services and professional advice. Just schedule a free consultation with our expert and trust your web scraping tasks to our professional team.

Publishing date: Sun Apr 23 2023

Last update date: Wed Apr 19 2023