Web Scraping with Login Required – How to Get Content from Websites Requiring Login Details

Read this post and ask the DataOx experts to learn how to scrape a website that requires login with Python, ParseHub, or Power BI.

Ask us to scrape the website and receive a free data sample in XLSX, CSV, JSON, or Google Sheets in 3 days

Scraping is our field of expertise: we have completed more than 800 scraping projects (including protected resources)

Estimated reading time: 4 minutes

Introduction to Web Scraping with Login Required

As a rule, data that requires a login to access is not public, which means that sharing it or using it for commercial purposes can be illegal. Before scraping such sources, you should always check the legality.

Collecting data from sources that require a login is one of the most common challenges in web scraping. So what can you do about it?

Keep reading to learn how to scrape a website that requires a login using ParseHub.

What Should You Check Before Scraping a Website?

If you are thinking about data scraping and want to handle it yourself, either by building a scraping bot or by using data scraping tools, first check the following points:

- Check whether scraping it is legal.

- Check the sitemap of the target website.

- Analyze the content and the size of the target website.

- Check copyright limitations.

- Choose where to store the data.

- Decide on scraping technology.
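A site's sitemap and crawl rules are usually declared in its robots.txt file. The following is a minimal sketch, assuming you have already downloaded robots.txt as text: Python's standard `urllib.robotparser` lists the declared sitemaps and tells you whether a URL is allowed for your user agent. The robots.txt content, the `MyScraper` user agent, the `inspect_robots` helper, and the example.com URLs are all hypothetical. This check complements, and does not replace, reading the site's terms of use.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; in practice, download it from
# https://<target-site>/robots.txt before parsing.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /login
Disallow: /account/
Sitemap: https://example.com/sitemap.xml
"""


def inspect_robots(robots_txt: str, user_agent: str, urls: list[str]):
    """Return the declared sitemaps and whether each URL may be crawled."""
    parser = RobotFileParser()
    # parse() takes the file as a list of lines, so no network call is made.
    parser.parse(robots_txt.splitlines())
    # site_maps() returns None when no Sitemap line is present.
    sitemaps = parser.site_maps() or []
    allowed = {url: parser.can_fetch(user_agent, url) for url in urls}
    return sitemaps, allowed


if __name__ == "__main__":
    sitemaps, allowed = inspect_robots(
        SAMPLE_ROBOTS_TXT,
        "MyScraper",  # hypothetical user-agent name
        ["https://example.com/blog/post-1", "https://example.com/login"],
    )
    print("Sitemaps:", sitemaps)
    for url, ok in allowed.items():
        print("allowed   " if ok else "disallowed", url)
```

Pages behind a login (here the hypothetical `/login` and `/account/` paths) are often disallowed for crawlers, which is one more signal to check the legal side before going further.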

Introducing ParseHub

ParseHub is a powerful web scraper designed to collect data from many kinds of web sources, including JavaScript- and AJAX-heavy sites. It offers features such as scheduled scraping, IP rotation, and attribute extraction.

And of course, ParseHub helps you overcome the most common obstacles you might encounter while scraping, such as a login screen.

Getting Started

So, before you start scraping websites that require passwords, take the following steps:

- Read the terms and conditions of the target website. Such restrictions usually exist for a reason, and respecting them protects you from legal complications later.

- Download and install ParseHub from its official website.

- Register a new Gmail account for your future scraping purposes.

How to Scrape a Website with Login Page

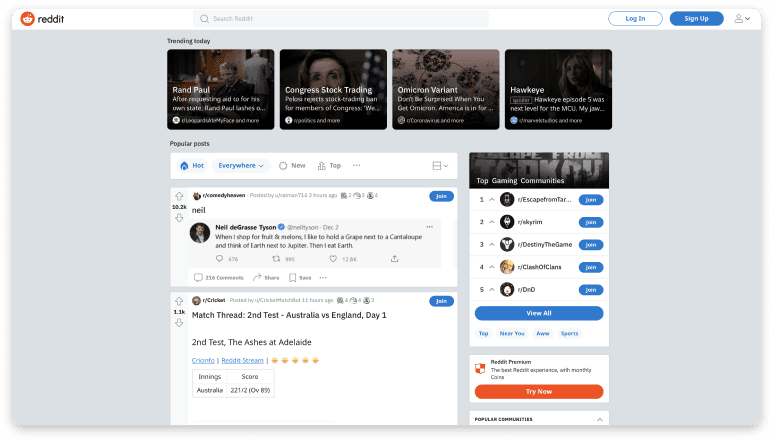

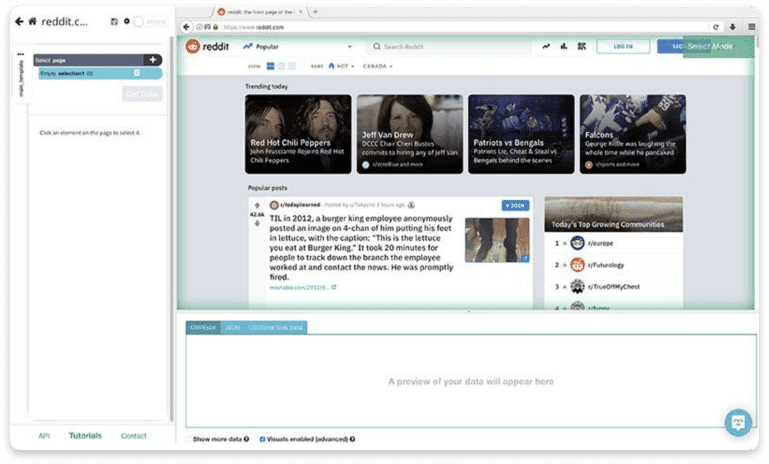

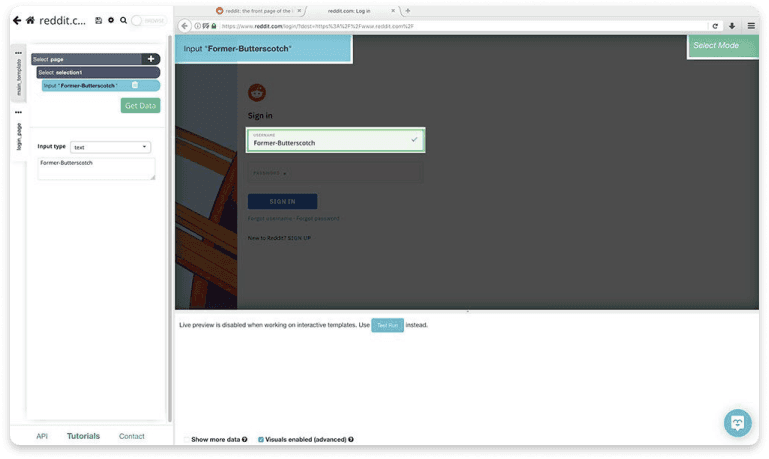

As an example of harvesting a page requiring authorization, we’ll consider Reddit.com.

- Run ParseHub and enter the URL of the target website.

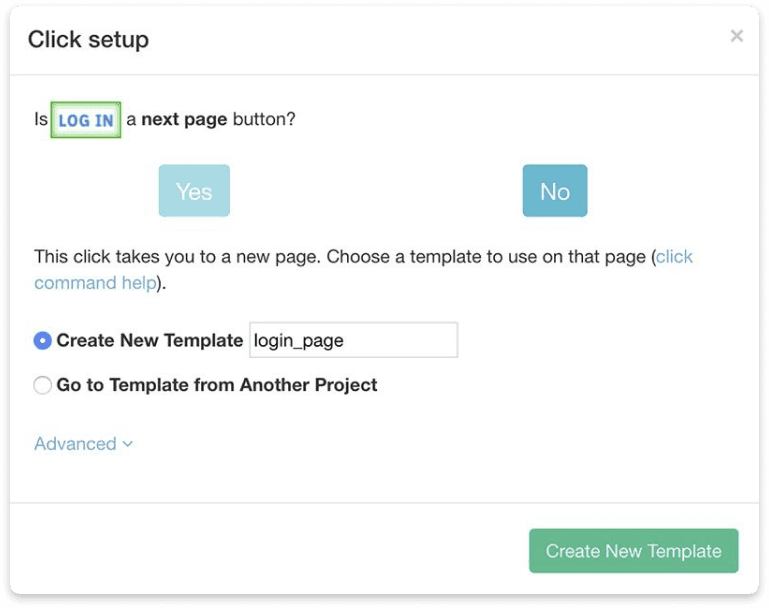

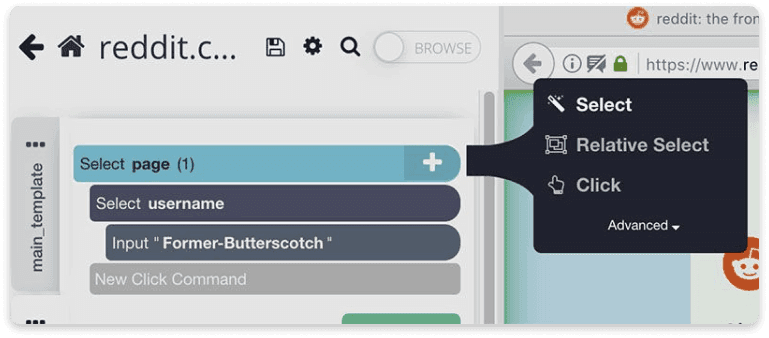

- Click the Log In button to select it, and rename the selection to login in the left sidebar. Then click the (+) button and choose the Click command.

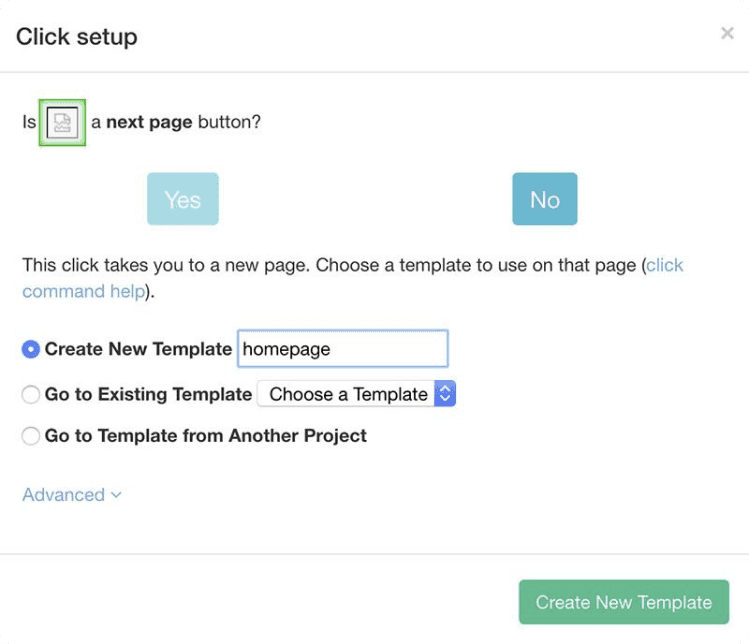

- In the pop-up window, click No and create a new template named login_page. ParseHub then opens a new browser tab for this template.

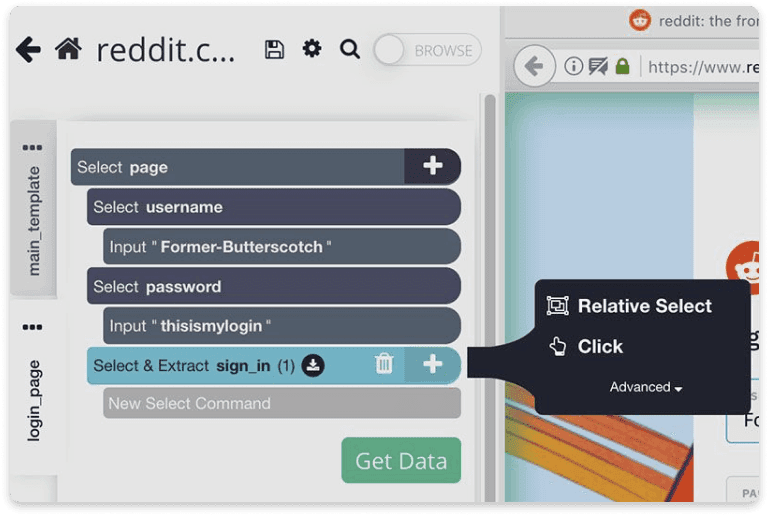

- Click the Username field, type your username, and rename the selection to username.

- Click on the (+) button and click on the Select command.

- Next, click on the Password field, enter your password, and change the name of the selection to password.

- Click on the (+) button and click on the Select command.

- Do the same for the Sign In button: click it and rename the selection to sign_in.

- Click on the (+) button and click on the Click command.

- In the pop-up window that appears, click No and create a new template named homepage.

Now you know how simple it is to get past a login page with ParseHub, and you can continue building your scraping project from the homepage template as usual.
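The ParseHub steps above (open the login page, fill in the username and password, click Sign In, continue on the homepage) correspond to an ordinary HTML form login. Below is a minimal sketch of the same flow using only Python's standard library, assuming the site uses a plain HTML login form. The field names `username` and `password`, the helper names, and all URLs are hypothetical; sites with JavaScript-driven or multi-factor login flows usually need a browser-based tool such as ParseHub instead.

```python
import urllib.parse
import urllib.request
from html.parser import HTMLParser
from http.cookiejar import CookieJar


class LoginFormParser(HTMLParser):
    """Collect the action URL and named <input> fields of the first <form>."""

    def __init__(self):
        super().__init__()
        self.action = None
        self.fields = {}
        self._in_form = False
        self._done = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "form" and not self._done:
            self._in_form = True
            self.action = attrs.get("action")
        elif tag == "input" and self._in_form and attrs.get("name"):
            # Keep pre-filled values such as hidden CSRF tokens.
            self.fields[attrs["name"]] = attrs.get("value") or ""

    def handle_endtag(self, tag):
        if tag == "form" and self._in_form:
            self._in_form = False
            self._done = True  # ignore any later forms (search boxes, etc.)


def build_login_payload(html, page_url, username, password,
                        user_field="username", pass_field="password"):
    """Return (absolute form action URL, form data with credentials filled in)."""
    parser = LoginFormParser()
    parser.feed(html)
    data = dict(parser.fields)
    data[user_field] = username
    data[pass_field] = password
    action = urllib.parse.urljoin(page_url, parser.action or page_url)
    return action, data


def login(login_url, username, password):
    """Log in and return an opener that reuses the session cookies."""
    opener = urllib.request.build_opener(
        urllib.request.HTTPCookieProcessor(CookieJar()))
    with opener.open(login_url) as resp:  # open the login page
        html = resp.read().decode("utf-8", "replace")
    action, data = build_login_payload(html, login_url, username, password)
    body = urllib.parse.urlencode(data).encode()
    with opener.open(action, data=body) as resp:  # submit the form ("Sign In")
        resp.read()
    return opener  # opener.open(...) now sends the session cookies
```

The cookie jar plays the role of ParseHub's browser session: once `login()` returns, every page fetched through the returned opener is requested as the logged-in user.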

How to Copy Data from a Protected Web Page

Even if your goal is to extract information for data analysis rather than plagiarism, keep in mind that many websites block copy-pasting of their content.

Check out the top methods to overcome this protection:

- Disabling JavaScript in the browser settings;

- Using special browser extensions;

- Copying text from the page source code;

- Using the browser's Inspect Element tool;

- Taking a screenshot and extracting text from the image.
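For the source-code method: when the text is present in the page's HTML itself (rather than loaded later by JavaScript), copy-protection scripts running in the browser do not affect it. The following is a minimal sketch with Python's built-in `html.parser` that strips tags and skips `<script>` and `<style>` contents. The `html_to_text` helper and the sample markup are hypothetical.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collect text nodes, skipping <script> and <style> contents."""

    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()  # convert_charrefs=True decodes entities like &amp;
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and not self._skip_depth:
            self.chunks.append(text)


def html_to_text(html: str) -> str:
    """Return the visible text of an HTML document, one chunk per line."""
    parser = VisibleTextExtractor()
    parser.feed(html)
    parser.close()  # flush any buffered text
    return "\n".join(parser.chunks)


if __name__ == "__main__":
    # Hypothetical page that disables copying with CSS and JavaScript.
    page = ("<html><head><style>p{user-select:none}</style>"
            "<script>document.oncopy=function(){return false;}</script></head>"
            "<body><h1>Title</h1><p>First &amp; second</p></body></html>")
    print(html_to_text(page))
```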

Hiring a Data Scraping Service Provider

Many of the largest companies entrust their scraping projects to data scraping service providers, especially when a project involves challenges such as scraping at scale, complex websites, or target pages that require a login.

Besides, much of the information to be extracted is unstructured or protected by anti-scraping mechanisms, and legal issues must be considered as well. That is where a good service provider comes into play!

Web Scraping with Login Required FAQ

How to get data from a website that requires a login?

The easiest way to scrape a website that requires a login is to use a ready-made tool like ParseHub. It lets you set up commands that fill in your login details and then get content from the target website. A more advanced approach is Python, but it requires considerable coding experience. That's why, for complex tasks, you can use online tools or hire DataOx experts.

How to do a web scraping login with Python?

You can scrape websites that require a login with Python in combination with BeautifulSoup. The key is to send the same cookies and headers with every HTTP request you make after logging in. Open the developer tools in your browser, visit the target website, and sign in. Switch to the Network tab, find the request for the page you want, right-click it, and choose Copy as cURL. Convert the cURL command into a Python requests call, and proceed with scraping using the received cookies and headers.
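The conversion step can be partly automated. The following is a minimal sketch, assuming a bash-style "Copy as cURL" command: it splits the command with `shlex` and collects the URL, the `-H` headers, and the cookies (from `-b` or a `cookie:` header) so every later request can reuse them. It is meant for GET page requests; request bodies are not kept, and only the flags listed in the code are recognized. The helper names, sample command, cookie values, and URL are hypothetical. The sketch builds a standard-library `urllib.request.Request`; with the requests library, you would pass the same dictionaries as `requests.get(url, headers=headers, cookies=cookies)`.

```python
import shlex
import urllib.request

# Flags whose next token is an argument we skip (bodies are not replayed).
FLAGS_WITH_ARG = {"-X", "--request", "-d", "--data", "--data-raw", "--data-binary"}


def _parse_cookie_string(raw: str) -> dict:
    """Turn 'a=1; b=2' into {'a': '1', 'b': '2'}."""
    cookies = {}
    for part in raw.split(";"):
        name, sep, value = part.strip().partition("=")
        if sep:
            cookies[name] = value
    return cookies


def parse_curl(command: str):
    """Extract the URL, headers and cookies from a 'Copy as cURL' command."""
    tokens = shlex.split(command)
    url, headers, cookies = None, {}, {}
    i = 1  # tokens[0] is "curl"
    while i < len(tokens):
        tok = tokens[i]
        arg = tokens[i + 1] if i + 1 < len(tokens) else ""
        if tok in ("-H", "--header"):
            name, _, value = arg.partition(":")
            if name.strip().lower() == "cookie":
                cookies.update(_parse_cookie_string(value))
            else:
                headers[name.strip()] = value.strip()
            i += 2
        elif tok in ("-b", "--cookie"):
            cookies.update(_parse_cookie_string(arg))
            i += 2
        elif tok in FLAGS_WITH_ARG:
            i += 2
        elif tok.startswith("-"):
            i += 1  # other flags, e.g. --compressed, are ignored
        else:
            url = tok
            i += 1
    return url, headers, cookies


def build_request(url, headers, cookies):
    """Build a Request that replays the browser's headers and cookies."""
    all_headers = dict(headers)
    if cookies:
        all_headers["Cookie"] = "; ".join(f"{k}={v}" for k, v in cookies.items())
    return urllib.request.Request(url, headers=all_headers)
```

Opening the result with `urllib.request.urlopen(req)` then fetches the page as the logged-in user, and the HTML can be handed to BeautifulSoup. Session cookies expire, so the copied values have to be refreshed from the browser from time to time.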

How to do Power BI web scraping with login?

Power BI Desktop has a separate section for data sources. However, complex scraping of websites that require login details is not built into the tool. To do it, you need to create a custom data connector using the M language.

Closing Thoughts on Web Scraping with Login Required

So, if you are going to handle your web scraping project yourself, keep in mind all the challenges listed above. But if you prefer to entrust it to professionals, DataOx experts are always ready to help you with any scraping job.

Schedule a free consultation with our experts to see the complete list of our web scraping services and learn how DataOx can help you scrape web sources that require login credentials.

Publishing date: Sun Apr 23 2023

Last update date: Wed Apr 19 2023